I found the required documentation here and a sample here. I adapted that sample to:

- not require a passphrase, as mentioned in the Synology documentation (a passphrase would break the build process);

- generate some entropy without rng-tools (not available out of the box on Synology).

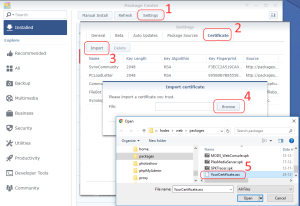

Obviously, you need gpg. I am using gpg2 made available by installing the package gpgme via ipkg as explained here.

Create keys in your personal key rings

First, put the parameters to be used by gpg into a file named ‘gpgKey‘ (don’t forget that it must use Linux line endings: not CRLF (\r\n) but only LF (\n)*). Below, I am using RSA 2048. Use your own name, comment and email address.

Key-Type: RSA

Key-Length: 2048

Subkey-Type: RSA

Subkey-Length: 2048

Name-Real: Type Here your name

Name-Comment: Type Here some comment

Name-Email: Type Here your email

Expire-Date: 0

%no-protection

%commit

Next, save the following script in a file named ‘createGpgKey.sh’ (don’t forget about CRLF 😉 )

#!/usr/bin/env bash

if [ ! -f ~/.gnupg/gpg.conf ]; then

mkdir -p -m 0700 ~/.gnupg

touch ~/.gnupg/gpg.conf

chmod 600 ~/.gnupg/gpg.conf

tail -n +4 /opt/share/gnupg/gpg-conf.skel > ~/.gnupg/gpg.conf

touch ~/.gnupg/{pub,sec}ring.gpg

fi

#generate some entropy

(dd if=/dev/zero of=/dev/null) & pid=$!

# generate the key (and a folder to be used later)

mkdir -p -m 0700 gpg

gpg2 --verbose --batch --gen-key ./gpgKey

#kill the entropy generator

kill $pid
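If the key generation fails or is interrupted, the background dd job in the script above may keep running. As a minimal sketch (the function name with_background_load is my own, not part of any tool), the same start/kill pattern can be wrapped so that the job is stopped whenever the wrapped command ends:

```shell
# Run a command while a background dd job is active, and always stop that job.
# The dd invocation is the same one used in the script above.
with_background_load() (
    dd if=/dev/zero of=/dev/null 2>/dev/null &
    load_pid=$!
    # Stop the background job when this subshell exits, whatever the outcome.
    trap 'kill "$load_pid" 2>/dev/null' EXIT
    status=0
    "$@" || status=$?
    exit "$status"
)
```

It would be used as: with_background_load gpg2 --verbose --batch --gen-key ./gpgKey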

Then, move those two files into a public shared folder of your Synology. Ex.: ‘\\<Your Nas>\temp‘

And open an SSH console as explained here (no need to enter root mode) to go into the shared folder: cd /var/services/temp

Finally, here is the output you should see when running your script: sh createGpgKey.sh

gpg: skipping control `%no-protection' ()

gpg: writing self signature

gpg: RSA/SHA1 signature from: "1418FFE0 [?]"

gpg: writing key binding signature

gpg: RSA/SHA1 signature from: "1418FFE0 [?]"

gpg: RSA/SHA1 signature from: "71C22B00 [?]"

gpg: writing key binding signature

gpg: RSA/SHA1 signature from: "1418FFE0 [?]"

gpg: RSA/SHA1 signature from: "71C22B00 [?]"

gpg: writing public key to `/var/services/homes/<current user>/.gnupg/pubring.gpg'

gpg: writing secret key to `/var/services/homes/<current user>/.gnupg/secring.gpg'

gpg: /var/services/homes/<current user>/.gnupg/trustdb.gpg: trustdb created

gpg: using PGP trust model

gpg: key 1418FFE0 marked as ultimately trusted

The keys are now stored into your home’s gpg folder: ls ~/.gnupg/

You can check that the key IDs displayed above are stored using gpg2 --list-keys and gpg2 --list-secret-keys:

gpg: checking the trustdb

gpg: 3 marginal(s) needed, 1 complete(s) needed, PGP trust model

gpg: depth: 0 valid: 1 signed: 0 trust: 0-, 0q, 0n, 0m, 0f, 1u

/var/services/homes/<current user>/.gnupg/pubring.gpg

--------------------------------------------

pub 2048R/1418FFE0 2017-12-26

uid Your Name (Your Comment) Your Email Address

sub 2048R/71C22B00 2017-12-26

gpg: checking the trustdb

gpg: 3 marginal(s) needed, 1 complete(s) needed, PGP trust model

gpg: depth: 0 valid: 2 signed: 0 trust: 0-, 0q, 0n, 0m, 0f, 2u

/var/services/homes/<current user>/.gnupg/secring.gpg

--------------------------------------------

sec 2048R/1418FFE0 2017-12-26

uid Your Name (Your Comment) Your Email Address

ssb 2048R/71C22B00 2017-12-26
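If you need the key ID in a script rather than reading it from the screen, here is a small sketch that pulls it out of a listing shaped like the one above. The function name extract_key_ids is hypothetical, and it assumes "pub" lines of the form "pub 2048R/1418FFE0 2017-12-26"; other GnuPG versions may format the listing differently.

```shell
# Print the short key ID found on each "pub" line of a listing read from stdin.
# Assumes lines shaped like: pub 2048R/1418FFE0 2017-12-26
extract_key_ids() {
    awk '$1 == "pub" { n = split($2, parts, "/"); if (n == 2) print parts[2] }'
}
```

For example: gpg2 --list-keys | extract_key_ids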

*: the trick to replace all CRLF with LF is to edit the file with Notepad++, use the menu Encoding > Convert to UTF-8, and then press CTRL-H to search for \r\n and replace it with \n, using the search mode = Extended. You can finally check that there is no CRLF left by using the menu View > Show Symbol > Show End of Line.
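The conversion can also be done directly on the NAS once the files are copied there. A minimal sketch, assuming tr is available in your shell (the function name to_lf is my own):

```shell
# Strip carriage returns so the file has LF-only line endings.
# Writes to a temporary file first, then replaces the original.
to_lf() {
    tr -d '\r' < "$1" > "$1.tmp" && mv "$1.tmp" "$1"
}
```

For example: to_lf gpgKey and then to_lf createGpgKey.sh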

NB: the script can be a bit slow if there is not enough entropy (up to 45 seconds on my NAS). Downloading a big file with Download Station can help 😉
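To get an idea of how much entropy the kernel thinks it has before launching the script, you can read the standard Linux counter. This is only a sketch: I am assuming that /proc/sys/kernel/random/entropy_avail is exposed on your DSM version, and the function name entropy_avail is my own.

```shell
# Print the kernel's entropy estimate, or "unknown" when the counter is absent.
# The presence of this /proc file on a given NAS is an assumption.
entropy_avail() {
    if [ -r /proc/sys/kernel/random/entropy_avail ]; then
        cat /proc/sys/kernel/random/entropy_avail
    else
        echo unknown
    fi
}
```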

You can now export your public key using either your name, your email or the key ID (in the illustration above, the key ID is 1418FFE0): gpg2 --armor --export 1418FFE0 > gpgkey.asc

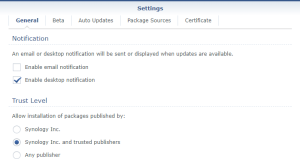

This file ‘gpgkey.asc’ must be copied into the root folder of your SSPKS server, so that it is automatically added as a trusted publisher for anyone who adds your SSPKS URL to their list of “Package Sources”.
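Before copying the file, it can be worth checking that the export really produced an ASCII-armored public key and not an empty file. A small sketch (the function name is_armored_public_key is hypothetical); it only checks the armor header and footer, not the key itself:

```shell
# Succeed if the file starts with the armor header and contains the armor footer.
is_armored_public_key() {
    [ "$(head -n 1 "$1")" = "-----BEGIN PGP PUBLIC KEY BLOCK-----" ] &&
        grep -q '^-----END PGP PUBLIC KEY BLOCK-----' "$1"
}
```

For example: is_armored_public_key gpgkey.asc && echo "looks fine"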

Now, assume that you have the package to be signed (an spk file) and the ‘CodeSign.php’ script from Synology’s toolkit (it can be downloaded from GitHub) in the same shared folder ‘temp’ used previously. Then, you can sign this package using: php CodeSign.php --sign=YourPackage.spk --keydir=~/.gnupg --keyfpr=1418FFE0
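If you have several packages to sign, the same command can be put in a loop. Here is a sketch under a few assumptions: the function name sign_all and its optional RUNNER argument (handy for a dry run with echo) are my own, I have only used CodeSign.php with a file name in the current folder as shown above, so passing a longer path is untested, and I pass the key folder as $HOME/.gnupg to avoid relying on ~ being expanded after the = sign.

```shell
# Usage: sign_all KEYDIR KEYID DIR [RUNNER]
# Signs every .spk file found in DIR. RUNNER defaults to php.
sign_all() (
    keydir=$1; keyid=$2; dir=$3; runner=${4:-php}
    for spk in "$dir"/*.spk; do
        [ -e "$spk" ] || continue   # no match: the glob stays literal
        "$runner" CodeSign.php --sign="$spk" --keydir="$keydir" --keyfpr="$keyid" || exit 1
    done
)
```

A dry run that only prints the commands: sign_all "$HOME/.gnupg" 1418FFE0 . echo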

Create keys in specific key rings

Instead of using your personal key rings, you could prefer to store your keys in a dedicated folder… To do so, you can use the following parameters in your gpgKey file:

Key-Type: RSA

Key-Length: 2048

Subkey-Type: RSA

Subkey-Length: 2048

Name-Real: Type Here your name

Name-Comment: Type Here some comment

Name-Email: Type Here your email

Expire-Date: 0

%no-protection

# Write the public key into the file gpg/pubring.gpg

%pubring gpg/pubring.gpg

# Write the secret key into the file gpg/secring.gpg

%secring gpg/secring.gpg

%commit

Once the script createGpgKey.sh has run, you will find two files, pubring.gpg and secring.gpg, in the folder gpg (created by the script, which runs in /var/services/temp/).

gpg: Generating a basic OpenPGP key

gpg: skipping control `%no-protection' ()

gpg: writing public key to `gpg/pubring.gpg'

gpg: writing secret key to `gpg/secring.gpg'

gpg: writing self signature

gpg: RSA/SHA1 signature from: "F93A0789 [?]"

gpg: writing key binding signature

gpg: RSA/SHA1 signature from: "F93A0789 [?]"

gpg: RSA/SHA1 signature from: "8AAC41CF [?]"

gpg: writing key binding signature

gpg: RSA/SHA1 signature from: "F93A0789 [?]"

gpg: RSA/SHA1 signature from: "8AAC41CF [?]"

You can now sign your package using: php CodeSign.php --sign=YourPackage.spk --keydir=/var/services/temp/gpg --keyfpr=F93A0789

You can also check your keys using:

- gpg2 --no-default-keyring --secret-keyring ./gpg/secring.gpg --keyring ./gpg/pubring.gpg --list-secret-keys

- gpg2 --no-default-keyring --secret-keyring ./gpg/secring.gpg --keyring ./gpg/pubring.gpg --list-keys

And you can export your public key to be used in your SSPKS using:

- gpg2 --no-default-keyring --keyring ./gpg/pubring.gpg --armor --export F93A0789 > gpgkey.asc
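Typing the key ring options each time is tedious, so here is a sketch of a small wrapper that adds them for you. The function name gpg_local and the variables GPG_BIN and KEYRING_DIR are my own illustrations, not part of gpg; the options themselves are the ones used in the commands above.

```shell
# Call gpg2 against the dedicated key rings instead of the personal ones.
# GPG_BIN and KEYRING_DIR are illustrative variables, not part of gpg itself.
gpg_local() {
    "${GPG_BIN:-gpg2}" --no-default-keyring \
        --secret-keyring "${KEYRING_DIR:-./gpg}/secring.gpg" \
        --keyring "${KEYRING_DIR:-./gpg}/pubring.gpg" "$@"
}
```

For example: gpg_local --list-keys, or gpg_local --armor --export F93A0789 > gpgkey.asc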

Create keys with a passphrase

In order to provide a passphrase, replace ‘%no-protection‘ in the parameters file with:

Passphrase: Type Here your passphrase
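To avoid editing the parameters file by hand (and the CRLF issue that comes with it), the file can also be generated on the NAS. This is only a sketch: the function name write_gpg_params is hypothetical, and it writes the same parameters as in the first section, switching between ‘%no-protection‘ and a Passphrase line depending on whether a fourth argument is given. Keep in mind that a passphrase typed on the command line may end up in your shell history.

```shell
# Usage: write_gpg_params NAME COMMENT EMAIL [PASSPHRASE] > gpgKey
# Prints a parameters file with LF line endings on stdout.
write_gpg_params() {
    printf '%s\n' \
        'Key-Type: RSA' 'Key-Length: 2048' \
        'Subkey-Type: RSA' 'Subkey-Length: 2048' \
        "Name-Real: $1" "Name-Comment: $2" "Name-Email: $3" \
        'Expire-Date: 0'
    if [ -n "${4:-}" ]; then
        printf 'Passphrase: %s\n' "$4"
    else
        printf '%s\n' '%no-protection'
    fi
    printf '%s\n' '%commit'
}
```

For example: write_gpg_params "Your Name" "Your Comment" "you@example.com" > gpgKey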
