These are a few notes for myself: I really struggled to get started with my DJI Spark.

Power on/off

The power button is:

- on the top right of the RC (the Remote Control);

- on the battery of the aircraft (the DJI Spark).

Before switching on the RC or the aircraft, charge them: plug the batteries into the battery charger and connect the remote to the same charger with the white USB cable.

Both the RC and the aircraft are switched on by:

- pressing the power button once, then immediately

- pressing and holding it until the 4 LEDs are on (white LEDs on the RC, green LEDs on the aircraft).

Once the RC or the aircraft is switched on, the number of LEDs lit shows the battery level (1, 2, 3 or 4). The aircraft plays a short tune. Its two front LEDs should stay solid red while the two back LEDs blink quickly.

To switch off the RC or the aircraft, do the same as to switch it on.

If the aircraft is switched off but the RC is not, the RC will start to beep after a while. Press any button to stop the beeping.

LEDs

Front LEDs (same side as the camera) show the orientation:

- Solid red (= not flashing) once the aircraft is turned on, to indicate the nose of the aircraft.

Back LEDs (same side as the battery) show the status (see the User Manual for details):

- Slow green flashing = Positioning mode with GPS

- Two green flashes = Positioning mode with Vision System (no GPS signal)

- Slow yellow flashing = Neither GPS signal nor Vision System (e.g. not enough light or a uniform surface)

Update the firmware

I found it much easier (and more stable) to update the aircraft via a PC (using the DJI Assistant 2 software) than via a mobile device (using the DJI GO 4 app).

- Install DJI Assistant 2 (to be found here) on your PC and run it.

- Plug a battery into your DJI Spark.

- Connect the DJI Spark to the PC using the USB cable. The USB port is behind a small flap at the back of the aircraft, above the battery.

- Turn on the DJI Spark. DJI Assistant 2 will soon detect it.

- Run a firmware update.

- Repeat this for each battery: they are Intelligent Flight Batteries, which also have their own firmware.

- Next, connect the Remote Control to the PC.

Notice: the remote control cannot be updated via DJI Assistant 2. It can only be updated from DJI GO 4, installed on a mobile device.

Official Videos to update firmware:

- with DJI Assistant 2: https://youtu.be/PRlV8fsVV0U

- with DJI GO 4: https://youtu.be/HZE-fP28htI

Some users report that they had to install an older version of the DJI GO 4 app on their mobile device, available here:

https://www.apkmirror.com/apk/dj … droid-apk-download/

Solving trouble linking the RC and the Spark

Linking the RC and the aircraft using the DJI GO 4 app can be difficult. If that's the case, you can do it manually (without the app).

First reset the DJI Spark (steps 1 to 5):

1. Power up your aircraft.

2. Then press its power button for 9 seconds (until you hear 1, then 2, and finally 3 beeps).

3. Restart your aircraft (power it off and on again).

4. Then press its power button for 6 seconds (until you hear 1 and then 2 beeps).

5. Restart your aircraft once more.

6. Now power up your remote (only now) and keep it close to the DJI Spark (< 20 cm). If the LED on the left is red, the RC needs to be linked with the aircraft.

7. Press the aircraft's power button for 3 seconds (until it beeps once). The front light should blink red.

8. Press 3 buttons on your remote to start the linking: the Pause button, the Fn button and the Custom button (bottom right, under the ‘camera’ shutter button). Hold them until the RC starts to beep continuously.

9. Wait until the LEDs start to blink slowly red.

If you don’t want to link the RC with the aircraft but only your mobile, skip steps 5 to 9 and instead connect your mobile to the aircraft’s Wi-Fi.

If it doesn't work, start again but skip steps 3 to 9. If DJI GO 4 prompts you to switch the WLAN settings because you are flying in another country, click CANCEL! This is said to be caused by the flight simulator, which ‘flies’ the drone in China. It should be fixed once the drone has enough GPS signal and a new home position.

Video: https://youtu.be/GOi9WiS-PF8

Asked for a China Mainland Number

I haven't tested this yet, but I keep it here just in case. So far, I just click ‘Cancel’ when I am asked to switch the WLAN settings. If I accept by mistake, I can no longer connect to the aircraft's Wi-Fi and have to do a complete reset as explained above.

- Uninstall the DJI app

- Install a file browser app on your phone (I used FX)

- Delete all folders and files with the word DJI in their name

- e.g. Main Storage > DJI folder and Main Storage > dji.go.v4 folder; there may also be a DJI text file in Main Storage.

- Reinstall the app

- Log into the app and turn off BOTH China settings

- Restart the app

- Turn on the RC and the aircraft (AC) and connect as normal

Fly with just the Remote Control

Yes, it’s doable! Link the RC with the aircraft. Once done, move the left stick to the bottom right (pointing at 4:30) and the right stick to the bottom left (pointing at 7:30). The motors will start to spin slowly but the aircraft won’t take off.

Next, push the left stick up to take off. Hold it down for a few seconds to land.

The Pause button can be used to stop immediately and hover. The RTH (Return To Home) button should also work, but who knows where ‘Home’ is defined! (NB: the Home Point should have been recorded automatically when the aircraft was turned on, but the GPS may have lacked a signal. So always check the status LEDs before flying: they should flash slowly green. Also note that the Home Point is updated every 15 m if the aircraft is following a subject (ActiveTrack) with Dynamic Home Point enabled.)

Without a mobile device connected, you won’t see any warnings, etc. The flight will be limited to ~30 m (to be verified).

https://www.djivideos.com/watch/84606e93-55cc-47d8-aec0-942e6ad354a9?autoplay=false&poster

NB: I am using this cable to connect the RC and my mobile: Lindy USB 2.0 Cable Type Micro B – USB cable – Micro USB B OTG. The red end, which is the OTG end, must be connected to the RC and the black end to the mobile. Always plug the cable into the mobile first, and only then plug the OTG end into the remote.

Use Gesture Mode

Once the aircraft is turned on and the front LEDs are solid red, double-tap the battery's power button to enter gesture mode. Hold the aircraft in front of your face, camera pointing at you.

The aircraft should beep twice and the front LEDs should turn solid green, meaning that face detection succeeded. Otherwise, double-tap the battery's power button again and keep the camera well aligned with your face.

After face detection, the LEDs turn solid red and the aircraft takes off and hovers. You can start making gestures at ~0.7 m from the aircraft. Check that the front LEDs are solid green. Solid red means that gesture mode has exited. Blinking yellow means that it's not working properly. (Blinking red means that the aircraft is about to take a selfie or record a video.)

Batteries

- Discharge the battery to 40%-65% if it will NOT be used for 10 days or more. This can greatly extend the battery life.

- Intelligent batteries will automatically discharge themselves after 10 days of non-use.

- DO NOT store the battery for an extended period after fully discharging it. Doing so may over-discharge the battery and cause irreparable battery cell damage.

- The battery will enter hibernation mode if depleted and stored for a long period. Recharge the battery to bring it out of hibernation.

- Remove batteries from the aircraft when stored for an extended period.

- Never over-discharge, as this may lead to battery cell damage.

- Battery life may be reduced if not used for a long time.

- Fully charge and discharge the battery at least once every 3 months to maintain battery health.

- Discharge a battery by turning on the aircraft and waiting until the battery is empty.
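The storage rules above boil down to a simple decision. Here is a small Python sketch of it, only as a memo: the thresholds (40% to 65% charge, 10 days) come from the notes above, while the function name `storage_advice` and its return strings are hypothetical.

```python
def storage_advice(charge_percent: int, planned_days_unused: int) -> str:
    """Hypothetical helper applying the battery storage rules from these notes."""
    if not 0 <= charge_percent <= 100:
        raise ValueError("charge_percent must be between 0 and 100")
    if planned_days_unused < 10:
        # The 40%-65% rule only applies when the battery rests 10 days or more.
        return "no action needed"
    if charge_percent > 65:
        return "discharge to 40-65%"
    if charge_percent < 40:
        # Also covers a fully discharged battery, which must not be stored as-is.
        return "charge to 40-65%"
    return "no action needed"
```

For example, `storage_advice(100, 30)` advises discharging a full battery before a month of storage. It does not model the battery's own automatic self-discharge after 10 days.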

Calibration

The process can be tricky and may look frozen. Watch this: https://youtu.be/df3ulddOqzU

Tutorials

- Beginners guide: http://www.wanderworx.com/dji-spark-beginners-guide

- RTH: https://store.dji.com/guides/how-to-use-the-djis-return-to-home

  - You first need to record your Home Point with at least 10 GPS satellites.

  - If the drone is between 3 m and 20 m from its Home Point, it will simply land.

  - If the drone is more than 20 m away, it will ascend to the preset RTH altitude before heading back home.

- Official Tutorials, Spec and User Manual: https://www.dji.com/spark/info

- DJI GO 4 Manual: https://store.dji.com/guides/dji-go-4-manual

- A lot of materials: http://www.spark-help.com/Spark
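The RTH rules from the tutorial notes above can be summarized as a small decision function. This Python sketch is only a memo of those notes, not DJI's firmware logic: the 10-satellite, 3 m and 20 m values come from the list above, and the function name and return strings are hypothetical. The notes don't say what happens below 3 m, so the sketch just reports that case as not covered.

```python
MIN_SATELLITES = 10  # from the notes: record the Home Point with at least 10 satellites


def rth_action(distance_m: float, satellites_when_recorded: int) -> str:
    """Hypothetical summary of the RTH behavior described in these notes."""
    if satellites_when_recorded < MIN_SATELLITES:
        # Home Point possibly not (or badly) recorded: RTH may go somewhere unexpected.
        return "home point unreliable"
    if distance_m > 20:
        return "ascend to RTH altitude, then fly home"
    if distance_m >= 3:
        return "land in place"
    return "not covered by these notes"
```

For instance, `rth_action(50, 12)` gives "ascend to RTH altitude, then fly home", while `rth_action(10, 12)` gives "land in place".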

Replace Propellers

Note that the propellers differ: two have white circles on top and two don’t.

The propellers with the white circles must be installed on the motors that have similar white marks on top.

Find lost DJI Spark

If you lose your Spark, e.g. after a crash, there are various ways to find it:

Via your “Flight List”:

- Run “DJI GO 4” App

- Under “Enter Device”, there are 4 entries: Equipment, Editor, SkyPixel and Me. Click on “Me”.

- Next, click on “Flight Record”

- You see your Flight Total Time, Travel Distance, Flight Times, Top Distance, Top Altitude, Top Speed, etc. At the bottom, you can open your “Flight List”. Do that.

- Select your very last flight. A map opens.

- If your GPS works, you should see yourself as a large blue dot, the path followed by your drone, and its position as a red triangle

- Move toward the red triangle.

Via “Find My Drone”:

- Run “DJI GO 4” App

- In the upper-right corner, open the menu

- Select Find My Drone

- You will see a map showing where you are (blue triangle) and where your drone is (red triangle)

- Click on the drone (red triangle) to get a menu to make it beep or flash its lights if it is connected. You also see its coordinates.

- Click on the coordinates. This is going to copy them into the clipboard.

- Open Google Maps and paste the coordinates. NB: the decimal separator must be a dot. E.g.: 50.894952, 4.341613

- If your GPS works, you should see yourself as a blue dot

- Move toward the target.
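If the copied coordinates use a comma as the decimal separator (common with some European locale settings), they need converting before pasting into Google Maps. A minimal Python sketch, assuming you have the latitude and longitude as two separate values; the helper names `parse_coordinate` and `maps_query` are hypothetical. Python's `f` format always writes a dot, whatever the system locale.

```python
def parse_coordinate(text: str) -> float:
    """Parse one coordinate, accepting a comma as decimal separator (e.g. "50,894952")."""
    return float(text.strip().replace(",", "."))


def maps_query(lat: float, lon: float) -> str:
    """Format coordinates as 'lat, lon' with a dot decimal separator."""
    if not (-90 <= lat <= 90 and -180 <= lon <= 180):
        raise ValueError("coordinates out of range")
    # The 'f' presentation type is not locale-aware, so the separator is always '.'
    return f"{lat:.6f}, {lon:.6f}"
```

With the example from the notes, `maps_query(parse_coordinate("50,894952"), parse_coordinate("4,341613"))` yields a string that can be pasted directly into Google Maps.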

Via the map in Fly Mode:

- If the drone is still connected and you see a mini map on your screen (as a picture-in-picture in the first-person view), open it.

- You should appear as a large blue dot and your drone as a red triangle.

- Move toward the red triangle.

Make sphere photos

- Use DJI Media Maker on a PC to create the 360° panorama

- Drag and drop the folder with the parts from the SD card (<drive>:\DCIM\PANORAMA\100_xxx) onto DJI Media Maker and select Mode: Panorama 360°

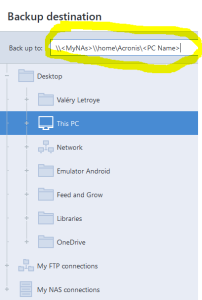

- Select the output folder. I pick the “public” shared folder on my Synology, since the image must then be transferred to a mobile/tablet.

- Then use Tiny Planet Maker on Android: https://www.youtube.com/watch?v=_pMZFLYbqoU

- Transfer the panorama image produced on your PC with DJI Media Maker and enjoy 😉

Alternatively, download the tiny planet directly from the DJI Spark while connected and in “Fly mode”:

- In the bottom-right corner of the DJI GO 4 app, while in Fly mode, there is a “play” icon. Press it.

- There you can see the Library with videos, photos and panoramas, including photospheres.

- Click on a photosphere to download the picture and create the globe.

- Watch the blue progress bar at the bottom of the screen. It can be very slow: the app first downloads all images, then assembles them (stitching the pano images).

- Do not go back to the library (arrow in the upper-left corner of the screen) during the download, as the progress would be lost. You may do it during the assembling step, however.

- Once the download is done, the screen may turn black with a spinning icon that never stops. If so, go back to the library and reopen the photosphere.

- You can touch the photosphere to zoom in, but you won't be able to zoom out manually.

- Then you can save it to your phone's local album (for editing) using the “download” icon in the bottom-right corner.

Once photospheres have been downloaded from the DJI Spark with the DJI GO 4 app, they can be opened with Theta+. This app is really great: it can create balloon mirrors, tiny planets, animations, etc.

- Theta+ 1.6.1 for Android also used to work with photospheres created on a PC using DJI Media Maker: https://www.youtube.com/watch?v=0oAPxgZ1kP8&t=194s

- This version has 3 buttons on Theta+’s home screen: Edit image, Animation and Timelapse. If not, you don’t have the right version.

- Mirror APK: https://apkpure.com/theta/com.thetaplus

- But since my first attempts, it no longer sees any photos in my library. It’s a pity, because it made very nice animations.

Mod Fly Modes

Use the DJI GO 4 Mod from here.

You will have access to all of the Mavic Pro’s flight modes, described here.

Take photos

When using Auto-Exposure, it can be useful to lock it. To do so, touch the screen on the area to be used. A target with an open lock appears and the exposure adjusts. Touch the lock to close it; the message “Auto Exposure locked.” appears. Touch anywhere again to unlock the exposure and recalculate it for the selected area.

Legal information

European information: http://dronerules.eu/en

All legal Belgian information: https://mobilit.belgium.be/fr/transport_aerien/drones (no English version).

A Belgian brochure: https://mobilit.belgium.be/sites/default/files/resources/files/asil_2017_01_pilotage_de_drones.pdf (English version available).

Lists of restricted areas:

- https://www.dji.com/flysafe/geo-map

- in Belgium: https://droneguide.be
